Back in late December, I planted a Raspberry Pi camera at a cottage on Georgian Bay, in Northern Ontario, set to take a picture once every two minutes. I had been planning the shoot for a couple of months before the deployment. There were two Raspberry Pis involved, in case one failed somewhere during the winter. One of the Pis was set to reboot once a week, just in case the software crashed but the Pi was still awake. I had also written some software for the time-lapse to ensure that pictures were only taken during the daytime, and to try to maintain a balance of well-lit, consistent images over the course of each day.
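For the curious, here is a rough sketch of the daytime-only idea. It is not the script that ran on the Pis, just a minimal illustration under some assumptions: the fixed daylight window and the names `DAY_START`, `DAY_END`, `is_daytime`, and `capture_times` are made up for this example, and the real software also handled exposure balancing, which is left out here.

```python
from datetime import datetime, time, timedelta

# Assumed values for illustration only; the real daylight window is not given.
DAY_START = time(7, 30)
DAY_END = time(17, 30)
INTERVAL = timedelta(minutes=2)  # one picture every two minutes


def is_daytime(now: datetime, start: time = DAY_START, end: time = DAY_END) -> bool:
    """Return True if `now` falls inside the fixed daylight window."""
    return start <= now.time() < end


def capture_times(first: datetime, last: datetime):
    """Yield every two-minute slot from `first` to `last` that is in daytime."""
    t = first
    while t <= last:
        if is_daytime(t):
            yield t
        t += INTERVAL
```

A real deployment would probably want the window to follow sunrise and sunset as the days lengthen, rather than a fixed pair of clock times like this.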

In spite of all the planning, I had a sense that something would go horribly wrong, and, indeed, when we showed up to the cottage, the windows were completely frosted over. The cameras had to be placed inside, so we figured we would mainly see the back side of an icy window when we retrieved the cameras. Or that the camera boards would literally freeze after about a week of sub-zero temperatures in the unheated cottage. Or that a raccoon would find its way in and gnaw through the shiny Lego cases. Or something else entirely unplanned for.

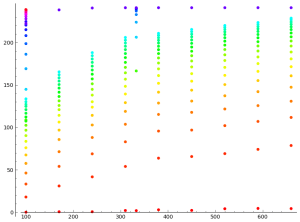

So it was a bit of a surprise when it turned out that the shoot went perfectly. We retrieved the cameras about a week ago, on May 7th, and found over 42,000 photos waiting for us on one of the cameras and somewhat fewer on the other. Both cameras had survived the winter just fine!

All told, I think the result was really cool! The video at the top is the ‘highlights’ reel, with all of the best days. It comes to 13 minutes at 18 frames per second. Turns out it was a fantastic winter for doing a time-lapse, with lots of snowstorms and ice. There’s even the occasional bit of wildlife, if you watch closely. I’ll post the full 40-minute time-lapse on YouTube sometime next week.