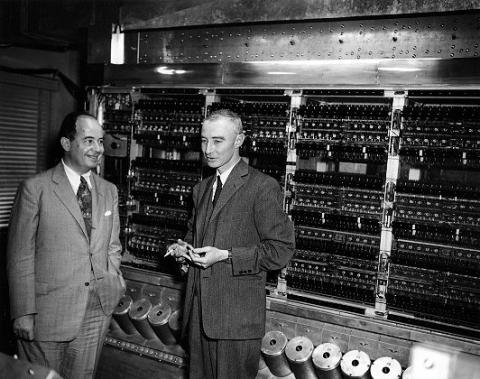

I’ve just finished reading George Dyson’s ‘Turing’s Cathedral,’ an absolutely fascinating book on early computing. The book focuses on John von Neumann’s ‘Electronic Computer Project,’ carried out at the Institute for Advanced Study (IAS) in the 1940s and 1950s. In fact, Turing makes only occasional and brief appearances: the book is almost entirely a portrait of the IAS project and the wide range of personalities involved.

For me, the book emphasized the importance of overcoming (or circumventing) boundaries in the pursuit of scientific progress. Von Neumann in particular became obsessed with applications (especially after Gödel’s incompleteness theorems put an end to the Hilbert programme), and served as a bridge between pure and applied mathematics. Meanwhile, construction of the physical computer brought in a variety of brilliant engineers. It’s clear that departmental politics at the IAS were still quite strong – the pure mathematicians didn’t have much regard for the engineers, and the computer project ground to a halt quite senselessly after von Neumann left the IAS. Dyson argues that, as a result, Princeton missed an opportunity to become a world center for computing theory and practice.

The computer project itself bridged numerous applications. One of the primary motivations was running the calculations that would allow the construction of the first hydrogen bomb, which brought significant investment from the US military. But alongside these calculations, the computer was used for early attempts at numerical weather prediction, using the grid method originally proposed by Lewis Fry Richardson in 1922, long before data collection and computational power were ready. Other projects included experiments with artificial life and cellular automata, and simulations of nuclear reactions inside stars.

All of this came just on the heels of the Manhattan Project, in which the divisions between applied and pure mathematics were broken down by the pressing need to win the war. Indeed, the ideas that came out of mathematics in response to the war would go on to drive many of the major scientific developments of the second half of the century.

And yeah, there’s been a lot of ink spilt over why the mid-20th-century scientific mega-projects were so successful in comparison to more recent efforts. It’s clear that the computer project didn’t exactly have the same laser focus as the Manhattan Project: the idea of building a universal computing machine is pretty inherently opposed to a laser focus on a single objective. There’s no formula for fundamental advances.

Diversity is helpful, though, and there’s very little to be gained from senseless siloing of academic disciplines. The proximity of a wide variety of problems in the computer project allowed ideas to circulate freely, so that simple ideas from one area could flow into the fundamentals of another research problem.

Specialization is easy. You have a smaller group of people that you’re trying to impress, and you don’t have to do the messy work of understanding new notations and formalisms. Once you’re on the frontier of knowledge, it’s easy to stay there, instead of learning your way to the frontier of an entirely different area. In contrast, when you pick up a new area, it’s difficult to tell what ideas have already been explored – what’s novel, and what’s so obvious that no one bothers to write it down. You also lose your social network and any name recognition you’ve built up.

But notice that the advantage of diversity is in global improvements, while the advantages of specialization are largely personal. Greedy algorithms are prone to get stuck in local optima, and innovation is exploration in a highly non-convex space.
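To make the metaphor concrete, here’s a toy sketch in Python. The landscape is entirely made up (a small hill next to a taller one), and the names `HEIGHTS`, `greedy_climb`, and `best_of_starts` are purely illustrative. It only shows the general point: a greedy climber stops at whichever peak is nearest, while starting from a more diverse set of positions has a better chance of finding the taller one.

```python
# A made-up 1-D "payoff landscape": a small peak at index 4 (height 4)
# and a taller peak at index 15 (height 10), separated by a valley.
HEIGHTS = [0, 1, 2, 3, 4, 3, 2, 1, 0, 1, 2, 3, 5, 7, 9, 10, 9, 7, 5, 3, 1]


def greedy_climb(start: int) -> int:
    """Step to the best neighbor until no neighbor is strictly higher."""
    x = start
    while True:
        neighbors = [n for n in (x - 1, x + 1) if 0 <= n < len(HEIGHTS)]
        best = max(neighbors, key=lambda n: HEIGHTS[n])
        if HEIGHTS[best] <= HEIGHTS[x]:
            return x  # stuck: a local (possibly not global) optimum
        x = best


def best_of_starts(starts: list[int]) -> int:
    """Run the greedy climber from several starting points and keep the best result."""
    return max((greedy_climb(s) for s in starts), key=lambda x: HEIGHTS[x])


if __name__ == "__main__":
    print(greedy_climb(2))              # a single climber near the small hill
    print(best_of_starts([2, 10, 18]))  # climbers spread across the landscape
```

The single climber that starts at position 2 never leaves the small hill, because every step away from it looks like a loss. Spreading the starting points out doesn’t make any individual climber smarter; it just improves the odds that one of them begins in the right basin.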

When I was making the choice to hop over to industry, these questions of diversity were pretty important to me. A huge number of essential figures in 20th-century math and CS spent time in industry, whether in the WWII-era military or at Bell Labs. Going out into industry has given me a great opportunity to put my hands in the dirt and get a sense for the problems people (and corporate ‘people’) are actually struggling with, and the sorts of requirements a solution has to meet to actually be applicable.

The division between academic math and industry deprives both sides. Mathematics is deprived of a steady supply of problems, while industry loses out on the radically different perspective that a good mathematician can bring to a problem. Since there’s a steady flow of mathematicians into industry, though, academic math suffers more in the relationship.

It’s interesting to note that there’s a much better relationship between industry and academia in computer science: the supply of problems back to the academics is better, in part because the problems of academic and industrial CS are better aligned, and in part because the transition to industry isn’t considered a one-way door out of the university. Still, there are better and worse ways to keep roads open back to academia (apply for ‘research’ jobs over SWE jobs), and better and worse ways to hop into a new field (find a collaborator, rather than jumping into the literature single-handedly). This is advice you rarely hear in pure math, though; I think there’s a lot to be gained by trying to keep these roads healthy and open.